Why intelligence and experience determine whether AI helps or harms

Last week I used an AI model to help me turn a client requirements session into a technical specification. It is the kind of task where you need to identify what was left unsaid, spot gaps the client cannot see, and design for failure modes that only experience teaches you to anticipate. Some of what the model suggested was useful; some was off the mark because it lacked context that exists only in the heads of people who have worked with this client for years. I pushed back where my experience told me the model was wrong, and the result was tighter than what I would have produced alone, precisely because I could evaluate its suggestions against my own judgment.

Later that same day, I needed a quick summary for an internal meeting. I handed the model three bullet points, pasted the result into an email without reading it closely, and moved on. I did not engage with the content at all.

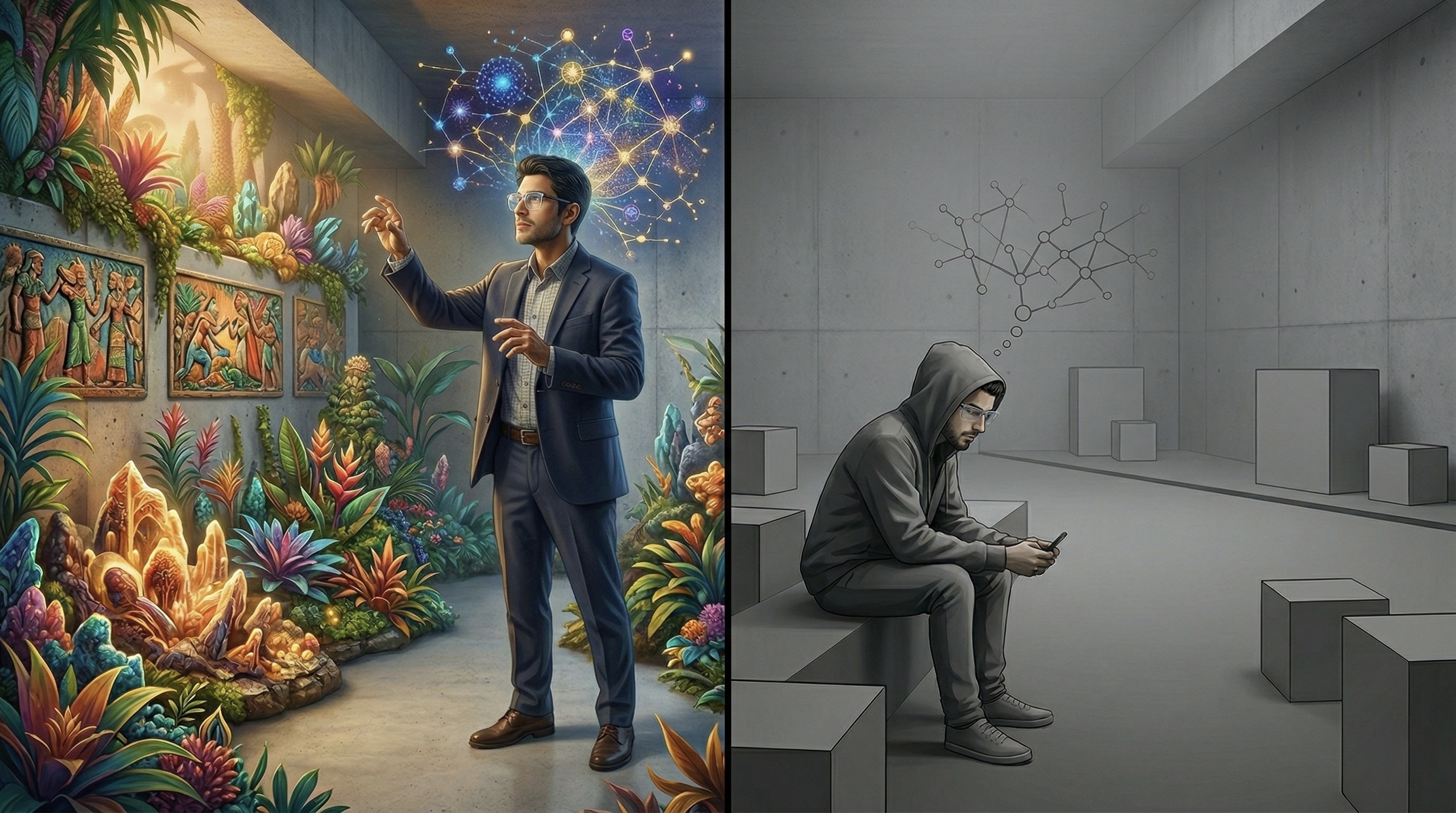

Same person, same tool, a few hours apart. In the first case, AI sharpened my thinking. In the second, it replaced it.

That afternoon crystallized something I had been noticing for a while. In conversations with colleagues and clients across industries, I kept seeing how many people had drifted into using AI purely to make things easier — accepting polished-looking output without scrutiny, whether out of time pressure or simple convenience. Some were experienced professionals who possessed the judgment to evaluate what the AI produced but rarely exercised it anymore. Others, particularly younger colleagues, seemed never to have developed that judgment at all. It was this second group that made me want to look more closely at what the research says about what is actually happening inside our heads when we use these tools.

What determines which mode wins

Research over the past two years points to a clear answer, and it has less to do with the technology than you might expect.

A major study by Microsoft Research and Carnegie Mellon University, based on 936 real-world examples of AI-assisted work tasks reported by knowledge workers across professions, found that people who were confident in their own professional abilities reported applying more critical thinking when working with AI, sometimes exceeding the level of scrutiny they brought to the same kind of task without AI. They challenged outputs, caught subtle errors, and used the model as a sparring partner rather than an oracle, because their competence and experience gave them the reference frame to notice when something was off. Those who lacked that reference frame showed the opposite pattern: they tended to trust the AI more, accepted plausible-sounding output without questioning it, and could not reliably distinguish genuine insight from confident-sounding nonsense. This held true even when they were aware, in principle, that AI makes mistakes. Knowing that a model can hallucinate is one thing. Having the depth in your own field to recognize where, specifically, it is hallucinating is something entirely different, and that depth is not acquired by reading a disclaimer.

A study at the MIT Media Lab, so far published as a preprint, offers a glimpse of what happens when AI enters a person's workflow before they have developed the ability to critically assess, differentiate, and question independently. Students who wrote essays with ChatGPT showed significantly reduced brain connectivity, and most could not recall their own writing minutes after producing it. The critical finding came in a crossover phase: when students who had worked independently for months were given AI, they produced better work with higher cognitive engagement. When students who had used AI from the start were asked to work alone, they showed markedly weaker performance and lower cognitive engagement. The capacity that independent effort would have built had, it appears, not developed to the same degree. The researchers call it "cognitive debt," and the troubling part is that it persisted even after the AI was removed. The brain, having adapted to reduced engagement, did not simply return to its previous level of activity.

The explanation from learning research is well established: cognitive competence is built through effort, not through exposure. Learning sticks best when the brain has to struggle, an effect learning psychologists call "desirable difficulties." AI, by design, removes much of that struggle. The smooth, efficient experience of AI-assisted work feels productive but may quietly erode the very competence it depends on.

The automation researcher Lisanne Bainbridge identified the structural version of this problem in 1983: when you automate routine work and leave only the exceptions for the human operator, you deprive them of the practice they need to handle exceptions well. A pilot's value does not lie in managing the normal flight but in intervening when the autopilot fails. That intervention requires competence built through practice. Without practice, the pilot may not even recognize the moment when intervention is needed, and by the time the failure becomes obvious, it is too late to act.

Starting conditions

If the shield against cognitive decline is earned judgment, the question becomes where that judgment is supposed to develop. The answer, for the generation now entering the workforce, is worth examining closely.

PISA 2022 delivered the lowest scores ever recorded across all three tested domains: mathematics, reading, and science. In Germany, the decline relative to 2018 exceeded 25 points in mathematics, roughly a full year of lost learning. Part of this decline is almost certainly attributable to COVID-related school closures and the improvised shift to remote learning, though the OECD's own analysis found no systematic correlation between the duration of school closures and the size of the performance drop, suggesting that the pandemic accelerated a trend rather than creating one. Neuroscientist Jared Cooney Horvath, testifying before the U.S. Senate in 2025, called Generation Z the first in modern history to score lower than its parents on standardized assessments, and described them as the victims of a failed experiment in placing technology in classrooms without a pedagogical strategy. The United States spent over 30 billion dollars on school laptops and tablets. The measurable cognitive return was negligible.

Generative AI arrives in precisely this landscape: a generation that, as the PISA data and Horvath's analysis suggest, has already gone through one round of technology-driven cognitive change before encountering tools that can perform the thinking itself. Whether one attributes the measured declines primarily to digital media, to pedagogical failures, or to some combination of both, the starting conditions are concerning. The cognitive abilities that critical AI use depends on (the capacity to question, to evaluate, to distinguish between plausible and accurate) are measurably weaker than they were a decade ago, and the education systems tasked with building them are not delivering.

And the PISA data reveals where the resulting divide already runs: in Germany, privileged students outperform disadvantaged ones by 111 points in mathematics, a gap wider than the OECD average. A cognitive divide along socioeconomic lines already exists before AI enters the picture. AI is likely to deepen it, because the protective factor — the competence and experience that enable critical evaluation — is distributed along exactly the same socioeconomic lines.

Where this leads if nothing changes

If these abilities remain as underdeveloped as they are, the most probable outcome is that critical thinking consolidates as a privilege of those who acquired it before AI became ubiquitous. People who developed strong professional judgment through family environments that model questioning, through education that demanded intellectual effort, or through years of professional experience are far more likely to use AI as an amplifier. Those who had fewer opportunities to build that judgment are at much higher risk of drifting into substitution without recognizing the difference, because cognitive erosion does not feel like loss — it feels like getting things done faster. The risk is not that AI makes everyone less capable. It is that it widens the gap between those who can evaluate what AI produces and those who cannot — under the quiet illusion that access to knowledge is the same thing as the ability to use it.

Avoiding that outcome would require something that has historically occurred only in response to crisis: deliberate structural reform of education systems. The habits that critical thinking later builds on — questioning, forming independent judgments, tolerating the discomfort of not having an immediate answer — would need to be cultivated from primary school onward, across subjects rather than in isolated programs. AI-free phases in early education would need to ensure that the ability to think independently is developed before the tool enters the curriculum. Where AI is introduced, it would need to be designed to ask questions rather than deliver answers. And the cognitive bar would need to be raised, as it was when calculators arrived in the 1970s and schools responded not by banning them but by making the problems harder.

Whether education systems can adapt quickly enough is an open question. Education policy, particularly in federal systems like Germany's, moves in cycles of years to decades. AI adoption moves in months. That mismatch alone makes it unlikely that systemic reform will arrive in time for the generation currently in school.

What you can influence

None of this is an argument for using AI less. My specification was better because of AI. What matters is not how much you use the tool but whether you are aware of which cognitive mode you are in — and whether you are choosing it or drifting into it.

Thinking through a problem before opening the chat window, even briefly, activates the neural pathways that keep your judgment sharp. Treating AI output as a first draft to be challenged rather than a finished product to be accepted changes the cognitive posture entirely. And simply noticing the moments when you stop reading closely — not to judge yourself for it, but to recognize the pattern — is itself a form of the metacognitive awareness that the research identifies as protective.

For those who work with or raise the next generation, the question of sequence becomes concrete: whether a young person's first encounter with a professional challenge involves struggling through it with their own resources or delegating it to a model is likely to shape how their brain develops the capacity for independent judgment. The MIT crossover study suggests that this sequence is not a matter of pedagogical preference but a neurobiological reality.

AI amplifies what is already there. That is what I take away from my own experience, and what is reflected in the research. If you bring intelligence and experience to the encounter, AI can make you considerably more productive. Your intelligence allows you to question what the model produces; your experience tells you which of those questions actually matter. But where neither intelligence nor experience is sufficiently developed, AI is likely to erode the little capacity that exists, because the cognitive effort that would have built those faculties over time gets skipped entirely.

Whether the generation now entering school will develop the intelligence and experience needed to use AI as an amplifier rather than a substitute may turn out to be one of the more important education questions of the coming decade. And it is one that has so far received less attention than it deserves.

References

Lee, H.-P. H., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., & Wilson, N. (2025). The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers. CHI Conference on Human Factors in Computing Systems (CHI '25), Yokohama, Japan. https://www.microsoft.com/en-us/research/publication/the-impact-of-generative-ai-on-critical-thinking-self-reported-reductions-in-cognitive-effort-and-confidence-effects-from-a-survey-of-knowledge-workers/

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025). Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task. arXiv preprint arXiv:2506.08872. https://www.media.mit.edu/publications/your-brain-on-chatgpt/

Bainbridge, L. (1983). Ironies of Automation. Automatica, 19(6), 775–779.

Bjork, R. A. (1994). Memory and Metamemory Considerations in the Training of Human Beings. In J. Metcalfe & A. Shimamura (Eds.), Metacognition: Knowing about Knowing (pp. 185–205). MIT Press.

OECD (2023). PISA 2022 Results (Volume I): The State of Learning and Equity in Education. OECD Publishing, Paris. https://doi.org/10.1787/53f23881-en

OECD (2023). PISA 2022 Country Profile: Germany. https://gpseducation.oecd.org/CountryProfile?primaryCountry=DEU&treshold=10&topic=PI

Horvath, J. C. (2025). Written testimony before the U.S. Senate Committee on Commerce, Science, and Transportation. Referenced in: Fortune, February 2026. https://fortune.com/2026/02/21/laptops-tablets-schools-gen-z-less-cognitively-capable-parents-first-time-cellphone-bans-standardized-test-scores/